Beware of False Precision

Valuing emerging growth companies is a creative balance of art and science.

Wrestling with stock valuations is a tricky business. It’s especially so for smaller growth companies.

Nearly 40 percent of public companies in the US had negative net income in 2020. The share is certainly higher among companies developing new technologies and applications. And the vast majority of companies in the emerging growth universe lack any consistent earnings track record.

A company can lose money for two broad reasons. One is that costs exceed revenues and the company is fundamentally unprofitable. The other is that expenses and investments made today are expected to generate attractive cash flows in the future.

Calculating an intrinsic value by discounting cash flows (DCF), a method widely used by analysts covering mid- and large-cap stocks, requires many assumptions about factors such as the discount rate, market demand, the state of the economy, technology, competition and unforeseen threats or opportunities. The range of potential outcomes is vast.
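
To make the point concrete, here is a minimal sketch of a DCF calculation in Python. The forecast figures, the 3 percent terminal growth rate and the function name `dcf_value` are all hypothetical assumptions chosen for illustration; they do not describe any company mentioned here. The sketch uses a simple Gordon-growth terminal value, which is only one of several common conventions.

```python
def dcf_value(cash_flows, discount_rate, terminal_growth):
    """Present value of explicit cash flows plus a Gordon-growth terminal value.

    cash_flows: forecast cash flows for years 1..N (hypothetical inputs).
    discount_rate, terminal_growth: decimals, e.g. 0.10 for 10 percent.
    """
    if discount_rate <= terminal_growth:
        raise ValueError("discount_rate must exceed terminal_growth")

    # Discount each forecast year back to today.
    pv_explicit = sum(
        cf / (1 + discount_rate) ** year
        for year, cf in enumerate(cash_flows, start=1)
    )

    # Value of all cash flows beyond the forecast, as of the final year,
    # then discounted back to today.
    terminal = cash_flows[-1] * (1 + terminal_growth) / (discount_rate - terminal_growth)
    pv_terminal = terminal / (1 + discount_rate) ** len(cash_flows)

    return pv_explicit + pv_terminal


# Hypothetical forecast in $ millions: losses today, cash flows later.
forecast = [-20, -10, 5, 25, 50]

# Same forecast, four plausible discount rates.
for rate in (0.08, 0.10, 0.12, 0.15):
    value = dcf_value(forecast, rate, terminal_growth=0.03)
    print(f"discount rate {rate:.0%}: value of about ${value:,.0f} million")
```

Running the loop shows how widely the "intrinsic value" swings when only one assumption changes. For a company whose cash flows are mostly in the future, nearly all of the value sits in the terminal term, which is exactly where the inputs are least knowable.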

Yet because the dispersion of growth rates in sales and profits narrows as companies get bigger, the task becomes easier as companies grow and mature. Moreover, the risk and expected return of a company’s stock tend to decline as the company moves through its lifecycle.

Over the years, Warren Buffett and Charlie Munger have articulated an approach to discounting at odds with academic finance. Buffett and Munger avoid complicated math and spreadsheets in favor of simple mental models they “do in their head.”

“Some of the worst business decisions I've seen came with detailed analysis,” according to Munger. “The higher math was false precision. They do that in business schools, because they've got to do something.”

His partner agrees that “false precision is totally crazy”. While Buffett accepts the principle of discounting cash flows, Munger says that he has never seen him perform a formal DCF analysis.

This dynamic duo invests in long-established companies with stellar brands and large margins of safety due to their earnings track records. They won’t even consider technology firms (Apple excepted) because of the unpredictability and volatile nature of their complex products and markets.

To this point, Tim O’Reilly, founder and CEO of O’Reilly Media, directs criticism at Tesla’s market valuation, but his reasoning applies across the spectrum: “In theory, the price of a stock reflects a company’s value as an ongoing source of profit and cash flow. In practice, it is subject to wild booms and busts that are unrelated to the underlying economics of the businesses that shares of stock are meant to represent.”

The stock market is a ‘beauty contest’ where investors are often steered by emotion and, as a result, make errors in judgment. Investors in emerging growth companies (EGCs), perhaps more than any other group, too frequently rely on insufficient analysis that leads to avoidable pitfalls and ultimately losses.

For its part, Wall Street has little incentive to devote research resources to EGC coverage. The economics of the business are uncomplicated: investment banking and research for bigger companies mean bigger fees, which makes them a more efficient and profitable use of limited resources.

Embracing the Matrix

The combination of emotional missteps and inadequate security analysis also produces opportunities to profit from the market’s mispricing of individual securities. In his excellent book, “Latticework: The New Investing”, author Robert G. Hagstrom writes on the importance of adding new building blocks to existing mental models:

“To think about investing differently (looking for the keys in the dark) requires us to think creatively. It requires a new and innovative approach to absorbing information and building mental models … to construct a new latticework of mental models, we must first learn to think in multidisciplinary terms and to collect (or teach ourselves) fundamental ideas from several disciplines, and then we must be able to link by metaphor what we have learned back to the investing world. Metaphor is the device for moving from areas we know and understand to new areas we don’t know much about. A general awareness of the fundamentals of various disciplines, coupled with the ability to think metaphorically, is critical to effective model building.”

Investment research on EGCs requires creative thinking in the way one absorbs information and builds mental models. Importantly, there is no textbook method or single process; each investor should make the effort to construct his or her own models based on knowledge, experience and financial goals.

In other words, successful investing isn’t dependent on accumulating the most data and information on companies.

In his 1975 book “Humble on Wall Street,” Martin Sosnoff echoes Buffett and Munger, writing that “the price of a stock varies inversely with the thickness of its research file. The fattest files are found in stocks that are the most troublesome and will decline the furthest. The thinnest files are reserved for those that appreciate the most.”

Once you’ve read enough 10-Ks of small and microcap companies, you eventually learn that the ‘clutter factor’ begins with the SEC filings. Nowhere in these documents is it more perplexing than in the sections on financings. If an investor is unable to connect the dots on a company’s capital structure, it’s a plausible signal to move on. The same lesson is valid for all aspects of equity research.

Third Stream Model

Evaluating small and microcap companies developing advanced technologies and new applications requires different methods from those used in most traditional sell-side research.

The process calls for shifting from a model structured around old data and information (e.g., financial reports) to one that views each company as a unique organism whose value is predominantly an expression of its people and intangible assets, neither of which is readily quantifiable.

Innovation, intangibles and narratives form the 'Third Stream' – the backbone of our research on emerging growth companies. EGCs lack the fundamentals of larger firms. This is especially true for companies sporting market capitalizations below $1 billion. Analyzing smaller companies using the same metrics and processes is inadequate.

Third Stream’s research model for EGCs is organized as a latticework with innumerable connecting nodes. Analysis for each company takes on a pattern dependent on findings of an initial SWOT analysis (strengths, weaknesses, opportunities, and threats) and factors such as life-cycle stage, capital structure, industry trends and competition.

Here’s the simple framework of our research model:

The latticework branches out in all directions from this framework. It consists of a combination of data, organized information and, most importantly, diverse sets of questions whose answers build a knowledge base about the company.

We set out to trace the process of value creation from the basic economic forces that shape a company's performance – sales, costs, and investments – to the resulting impact on value drivers. Qualitative factors, alternative data, comparative analyses, and market intelligence from diverse stakeholders, including customers, partners, suppliers and competitors together form a coherent picture.

As we underscored last week, the primary aim is evaluating the ability of individual companies to execute in the face of complex technological, economic and market risks and challenges.

Stories drive valuation for EGCs. They are most effective when compelling details weave together with revealing signposts to form larger narratives. Value drivers like a culture of innovation, unique assets or proprietary technologies, a strong competitive position, attractive growth markets, loyal customers, a rising market share, and impactful catalysts interact to multiply shareholder value over time.

News & Trends

Artificial Intelligence: From Winter to Spring

In a new book titled “Rule of the Robots: How Artificial Intelligence Will Transform Everything”, author Martin Ford explains how deeply artificial intelligence (AI) has been integrated into the infrastructure and business models of the largest technology companies over the past few years:

“These companies have seen significant returns on their massive investments in computing resources and AI talent, and they now view artificial intelligence as absolutely critical to their ability to compete in the marketplace. Likewise, nearly every technology startup is now, to some degree, investing in AI, and companies large and small in other industries are beginning to deploy the technology. …”

I had read Ford’s 2015 book “Rise of the Robots,” in which he delivered a clear-eyed economic analysis of automation and various scenarios for major industries and the global economy. In between these two books, he interviewed 23 of the most experienced AI and robotics researchers in the world for “Architects of Intelligence”.

In “Rule”, Ford brings up the sensitive issue of ‘brittleness’ in an AI system, which refers to inflexibility and an inability to adapt to even small unexpected changes in its input data. It turns out that AIs cannot perform a mental-rotation task that even a young child could do.

An IEEE Spectrum article on the limits of AI lists just a few of the “numerous troubling” cases where brittleness occurs:

“Fastening stickers on a stop sign can make an AI misread it. Changing a single pixel on an image can make an AI think a horse is a frog. Neural networks can be 99.99 percent confident that multicolor static is a picture of a lion. Medical images can get modified in a way imperceptible to the human eye so medical scans misdiagnose cancer 100 percent of the time. And so on.”

One possible way to make AIs more robust against such failures is to expose them to as many confounding ‘adversarial’ examples as possible, according to computer scientist Dan Hendrycks at the University of California, Berkeley. However, they may still fail against rare ‘black swan’ events. “Black-swan problems such as COVID or the recession are hard for even humans to address – they may not be problems just specific to machine learning,” he notes.

A broader issue raised by Ford is scalability in AI and limitations of simply throwing more computing resources at a problem. As long as companies and researchers approach AI as an arms race (i.e., the most firepower wins), there is less incentive to invest in the much more difficult work of true innovation.

Ford draws a comparison to Moore’s Law. For a long time, the doubling of computer speeds every two years was scripture, with chip manufacturers cranking out ever faster versions of the same microprocessor designs from companies like Intel and Motorola.

“In recent years,” says Ford, “the acceleration in raw computer speeds has become less reliable, and our traditional definition of Moore's Law is approaching its end game as the dimensions of the circuits imprinted on chips shrink to nearly atomic size.”

Ford says this has forced more ‘out of the box’ thinking, resulting in innovations such as software designed for massively parallel computing and entirely new chip architectures – many of which are optimized for the complex calculations required by deep neural networks. He expects “the same sort of idea explosion to happen in deep learning, and artificial intelligence more broadly, as the crutch of simply scaling to larger neural networks becomes a less viable path to progress.”

Leveraging A.I. in the Enterprise – High Growth for SaaS Firms

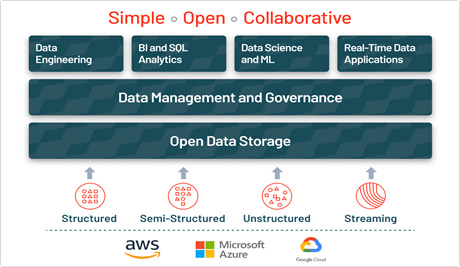

One of the hottest private tech firms around is Databricks. The company, which makes open source and commercial products for processing structured and unstructured data in one location, views its market as a new technology category.

For Databricks, advanced analytics and machine learning on unstructured data is one of the most strategic priorities for enterprises today. With the ability to ingest raw data in a variety of formats (structured, unstructured, semi-structured), its ‘data lake’ aims to be the foundation for this new, simplified architecture.

A data lake is a central location that holds a large amount of data in its native, raw format. Compared to a hierarchical data warehouse, which stores data in files or folders, a data lake uses a flat architecture and object storage to store the data.

Databricks CEO and co-founder Ali Ghodsi believes that combining structured and unstructured data in a single place – with the ability for customers to execute data science and business-intelligence work without moving the underlying data – is a critical change in the larger data market.

Big investors concur. On August 31, Databricks completed a $1.6 billion Series H funding round that boosted its valuation to $38 billion – a $10 billion increase since February when it added Amazon, Google and Salesforce as investors in a separate $1 billion capital raise.

Databricks has raised nearly $3.6 billion to date and is expected to generate $1 billion or more in 2022 revenue, growing 75% year over year. The company says its annual recurring revenue (ARR) has climbed to $600 million, up from about $425 million the prior year.

That’s quick for a company of its size. According to the Bessemer Cloud Index, top-quartile public software companies are growing at around 44% year over year. Those companies are worth around 22x their forward revenues. At its new valuation, Databricks is worth a hearty 63x its current ARR.
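
The arithmetic behind these multiples is simple enough to check directly. The short Python sketch below uses only the figures quoted in this section; the helper name `revenue_multiple` is our own, and the Snowflake line divides market cap (not enterprise value) by LTM revenue, so it is a rough cross-check rather than a reproduction of the enterprise-value multiple cited later.

```python
def revenue_multiple(valuation, revenue):
    """Valuation divided by a revenue measure (ARR, LTM or forward sales)."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return valuation / revenue


# Figures as quoted in the text, in US dollars.
databricks_valuation = 38e9   # post-money valuation after the Series H round
databricks_arr = 600e6        # annual recurring revenue reported by the company
snowflake_market_cap = 94e9
snowflake_ltm_revenue = 851e6

databricks_multiple = revenue_multiple(databricks_valuation, databricks_arr)
snowflake_multiple = revenue_multiple(snowflake_market_cap, snowflake_ltm_revenue)

print(f"Databricks: {databricks_multiple:.1f}x ARR")
print(f"Snowflake:  {snowflake_multiple:.1f}x LTM revenue (market-cap basis)")

# Compare against the roughly 22x forward-revenue multiple cited for
# top-quartile public software companies. The bases differ (ARR vs forward
# revenue), so treat the ratio as indicative only.
print(f"Databricks premium vs. 22x peers: {databricks_multiple / 22:.1f} times")
```

Multiples like these are only comparable when the numerator and denominator are defined the same way, which is one more reason to treat a single headline figure with caution.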

Later this year, Databricks is expected to stage the largest-ever IPO of a software firm. The deal would be larger than the late-2020 IPO of Snowflake (SNOW), its most serious rival, setting up a battle between the two over the next few years.

“Whereas most of Databricks’ code is open-source, Snowflake’s is proprietary,” reports The Economist. “And whereas Databricks has mostly stuck to a ‘land-and-expand’ strategy, whereby small software deals grow into bigger ones, Snowflake practices a more conventional top-down sales model that focuses on big deals from the start.”

Snowflake, which recorded last-12-months (LTM) revenue of $851 million (year-over-year growth: 111%), has a market cap of $94 billion and an enterprise value of 106x LTM sales. While SNOW is still reporting losses, Databricks has not disclosed its bottom line.

Docebo (DCBO) is also an AI-enabled SaaS firm, publicly traded since completing its IPO on the Toronto Stock Exchange in October 2019, before getting listed on NASDAQ in December 2020.

The company offers an enterprise-facing e-learning platform. It says its portfolio of e-learning solutions allows companies to create content using AI as well as handle course enrollment and lesson delivery, all accessed through cloud-based software. Founded in 2005, Canada-based Docebo launched an open-source model that could be installed on enterprise servers; in 2012 the company transitioned to a cloud-based SaaS business model.

It claims to be the first company to leverage AI and transform corporate e-learning into a competitive advantage for clients who can access data-driven insights and enhance user experience.

By almost any measure, e-learning is booming. According to a 2021 U.S. government report, the demand for e-learning is likely to leap from just 5 percent of all students in higher education in 1998 to 15 percent by 2002. In the corporate sector, spending on employee training last year totaled $2.5 billion, about 40 percent of which went to on-line education.

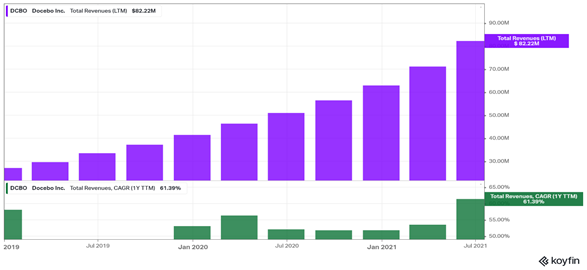

Docebo’s sales have climbed from $17 million in 2017 to $61.9 million in 2020. In the last 12 months, sales have touched $82.2 million and are forecast to reach $103 million in 2021 and $140.6 million in 2022.

For Q2 2021, DCBO generated revenue of $25.6 million, an increase of 76% compared to one year ago. Subscription revenue was $23.6 million (92% of total revenue), an increase of 76% year-over-year. Despite a gross margin of over 80%, DCBO remains unprofitable, albeit with narrowing losses, as it continues to focus on top-line expansion.

DCBO shares are up 21% year-to-date and have doubled since their March 25 low, giving the company a $2.7 billion market cap. It’s noteworthy that Docebo’s enterprise value is up to 30.8x LTM sales versus the 5.4x run rate for 2019.

Act of Discovery

Animating the COVID-19 Vaccine

A recent tweet from Larry Brilliant, MD, reads as follows:

“Hey—from your friendly neighborhood epidemiologist professor. This Family Guy cartoon should be used in all college epidemiology courses to supplement textbooks. Hell. Maybe INSTEAD of some textbooks. Great job @SethMacFarlane”

I took this seriously because Brilliant is an epidemiologist, technologist, philanthropist, and author who helped to successfully eradicate smallpox in the mid-70s, among a long list of his other accomplishments.

Here’s the video titled “Family Guy COVID-19 Vaccine Awareness PSA”:

To dig a little deeper, these sites provide lots of solid information on COVID-19 vaccines:

Mayo Clinic: COVID-19 vaccines: Get the facts

Johns Hopkins Medicine: COVID-19 Vaccines: Myth Versus Fact

If you’re feeling ambitious, a Sept. 14, 2021 article in Nature magazine, “The tangled history of mRNA vaccines”, offers a great accounting of the many experiments with messenger RNA over decades and how they led to two of the most important and profitable vaccines in history: the mRNA-based COVID-19 vaccines given to hundreds of millions of people around the world. Global sales of these are expected to top $50 billion in 2021 alone.

The story, according to the author, “illuminates the way that many scientific discoveries become life-changing innovations: with decades of dead ends, rejections and battles over potential profits, but also generosity, curiosity and dogged persistence against scepticism and doubt.”

See you next week, and thank you for your support.

Josh

Disclaimer

The content provided in this newsletter is intended to be used for informational purposes only. It is important to do your own analysis before making any investment based on your own personal circumstances. You should take independent financial advice from a professional in connection with, or independently research and verify, any information that you find on our Website and wish to rely upon, whether for the purpose of making an investment decision or otherwise.